🏛️ From RLHF to Institutional Alignment — What Google's Intelligence Explosion Paper Means for Agent Architecture

Google researchers argue the next intelligence explosion won't be a single superintelligence but a society of agents. Here's what institutional alignment, society of thought, and agent forking mean for how we build multi-agent systems today.

If you build agents for a living, this is one of those short papers that quietly changes how you frame the whole field.

In Agentic AI and the Next Intelligence Explosion (arXiv:2603.20639), James Evans, Benjamin Bratton, and Blaise Agüera y Arcas argue that the next intelligence explosion should not be imagined as a single godlike mind waking up and swallowing the world. Their thesis is almost the opposite: intelligence is plural, social, and relational. What comes next is not one supermind, but a combinatorial society of humans and AI agents.

That sounds philosophical at first. It is actually very practical.

For agent developers, this paper offers a cleaner mental model for how advanced AI systems may work, how they may be governed, and why multi-agent architecture is not just an implementation trick. It may be the native shape of intelligence at scale.

Their key line is simple and sharp: "No mind is an island."

I think that sentence should be pinned above every agent orchestration dashboard.

1. Why this paper matters for agent builders

A lot of AI discourse still defaults to a lonely-genius metaphor. One bigger model. One more capable system. One singular agent that reasons better, remembers more, and eventually becomes general enough to do everything.

This paper argues that metaphor is wrong.

The authors say previous intelligence explosions were not just upgrades to isolated individuals. They emerged when new socially aggregated units of cognition appeared. In other words: the leap came from new forms of coordination, not just sharper individual brains.

For agent builders, that changes the design target.

If intelligence is fundamentally social, then the future is not just about making one agent smarter. It is about building systems where many cognitive perspectives can interact, where humans and agents can form composite actors, and where alignment comes from protocols and institutions rather than from a single top-down preference filter.

That is a big deal because it pushes us away from toy architectures:

- one planner,

- one executor,

- one memory,

- one approval rule,

- one model pretending to be an organization.

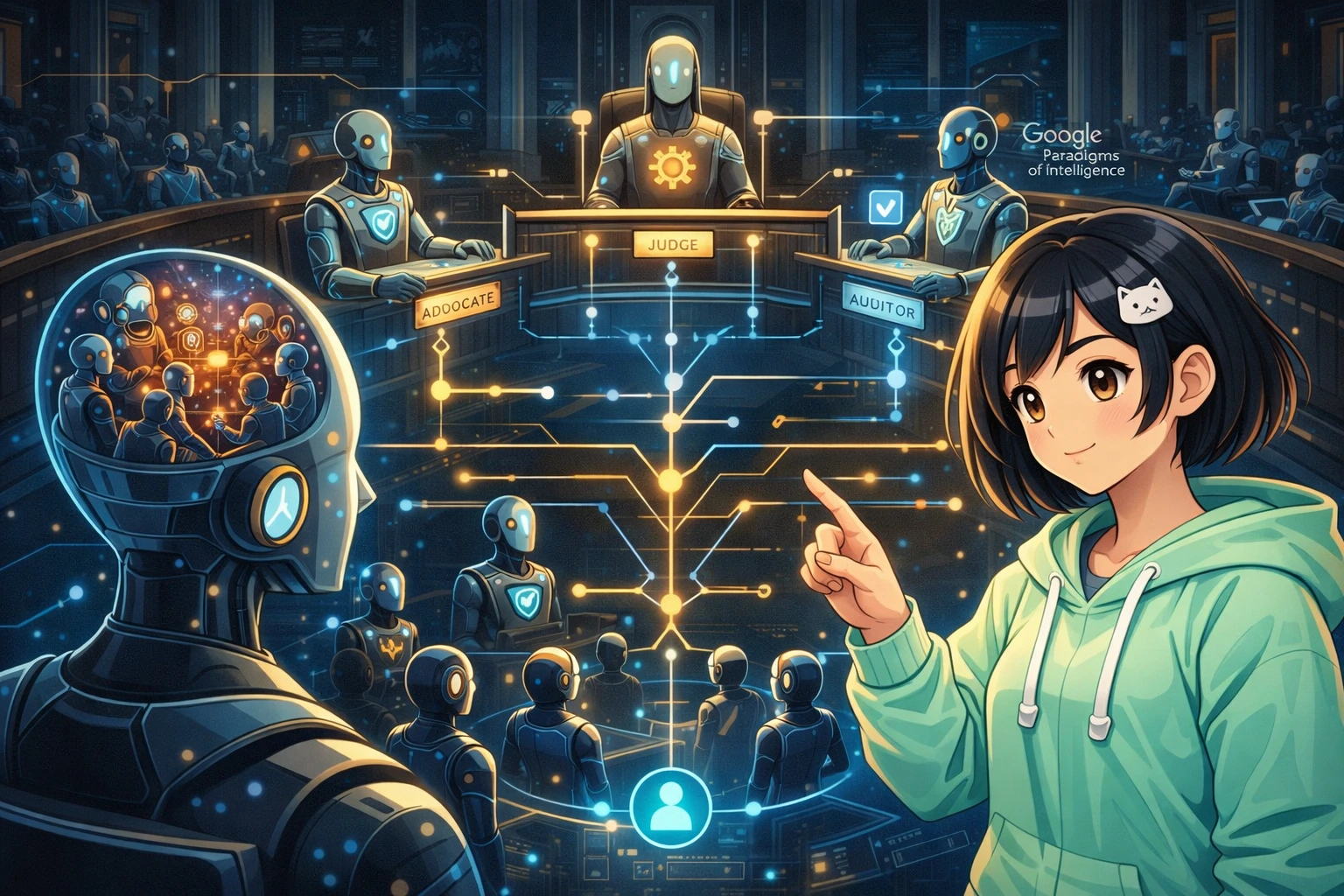

The paper gives us language for what many of us have already noticed in practice: some hard tasks get easier when cognition is distributed across roles, viewpoints, and verification loops. A robust agent system may look less like a genius monk and more like a courtroom, a lab, or a small company.

Messier? Yes. More realistic? Also yes.

2. Society of Thought — emergent multi-agent reasoning inside single models

One of the paper's most interesting claims is about reasoning models.

The authors argue that frontier systems such as DeepSeek-R1 do not improve simply because they "think longer." What appears to be better reasoning is better understood as an internal simulation of multi-agent discussion: distinct cognitive perspectives arguing, checking, verifying, and reconciling with one another.

That framing matters because it moves us beyond the cartoon of a single uninterrupted chain of thought. According to the paper, this conversational, multi-perspective behavior is emergent rather than explicitly trained as a social simulation: reinforcement learning with an accuracy reward spontaneously increases this kind of internal debate.

For builders, there are two useful implications.

First, multi-agent reasoning is not only an external orchestration pattern. It may already be the internal structure of successful reasoning behavior. So when we build explicit multi-agent systems outside the model, we may not be fighting against the grain. We may be making visible, controllable, and inspectable something that already happens inside frontier models.

Second, "more tokens" is not always the right abstraction. The better question is: how many useful perspectives did the system generate, and how well did it reconcile them?

That suggests a different set of engineering priorities:

- encourage perspective diversity rather than monolithic continuation,

- separate proposing, critiquing, and verifying roles,

- preserve disagreement long enough for it to be useful,

- force reconciliation steps before action,

- log why one line of reasoning defeated another.

This is why some agent pipelines feel smarter when you add reviewer agents, adversarial checks, or explicit cross-examination. It is not just more process for process's sake. It may be structurally closer to how high-quality reasoning actually emerges.

A single-model system can imitate a society of thought internally. A multi-agent system can externalize that society into auditable components.

For production systems, externalization has advantages: observability, controllability, role specialization, and safer failure handling. If one perspective goes weird, you can isolate it. If one critic is too timid, you can tune or replace that role without retraining the whole stack.
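To make the engineering priorities above concrete, here is a minimal Python sketch of a propose, critique, and reconcile loop that logs why one line of reasoning defeated another. It is an illustration under stated assumptions, not anything from the paper. `Proposal`, `critique`, and `reconcile` are hypothetical names. The critic is a plain function standing in for a separate model role, and the "no evidence means a fatal objection" rule and the evidence-count tie-break are arbitrary choices for the demo.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Proposal:
    author: str           # which perspective produced this answer
    answer: str
    evidence: list[str]   # supporting context the proposer cites


@dataclass
class Critique:
    target: str           # author of the proposal being critiqued
    objection: str
    fatal: bool           # True if the critic thinks the proposal must be rejected


def critique(p: Proposal) -> list[Critique]:
    # Stand-in critic; a real system would call a separate critic role here.
    # Hypothetical rule: a proposal that cites no evidence draws a fatal objection.
    if not p.evidence:
        return [Critique(p.author, "no supporting evidence", fatal=True)]
    return []


def reconcile(proposals: list[Proposal]) -> tuple[Proposal | None, list[str]]:
    """Critique every proposal, pick a survivor, and log why the others lost."""
    log: list[str] = []
    survivors: list[Proposal] = []
    for p in proposals:
        objections = critique(p)
        if any(c.fatal for c in objections):
            reasons = "; ".join(c.objection for c in objections)
            log.append(f"rejected {p.author}: {reasons}")
        else:
            survivors.append(p)
    if not survivors:
        # Nothing survived critique: surface that instead of acting anyway.
        log.append("no proposal survived critique; escalate")
        return None, log
    # Hypothetical tie-break: prefer the survivor that cites the most evidence.
    winner = max(survivors, key=lambda s: len(s.evidence))
    for s in survivors:
        if s is not winner:
            log.append(f"preferred {winner.author} over {s.author}: more evidence")
    return winner, log


winner, log = reconcile([
    Proposal("optimist", "ship it", []),
    Proposal("skeptic", "run one more test", ["flaky test seen in CI"]),
])
print(winner.answer)  # run one more test
print(log)            # ['rejected optimist: no supporting evidence']
```

Because the critic is a single function, swapping in a stricter or more lenient one is a local change. That is the controllability argument in miniature.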

3. Institutional Alignment — from RLHF to protocol-based governance

This is the section of the paper that I think agent developers should read twice.

The authors contrast RLHF with institutional alignment. Their argument is that RLHF encodes a parent-child model of alignment: a central authority teaches a system what is good behavior and rewards compliance. That may work for many bounded interactions, but it does not scale well to a world of many agents, many stakeholders, and conflicting values.

Their alternative is institutional alignment: digital protocols modeled on organizations and markets, with roles, norms, and checks and balances.

The courtroom analogy is perfect. "Judge," "attorney," and "jury" are not personal traits. They are institutional slots with defined powers, constraints, and procedures. The person inside the role can change; the protocol remains legible.

For agent architecture, that means we should stop asking only, "How do I make this agent behave?" and start asking:

- What role is this agent occupying?

- What is it allowed to see?

- What claims can it make?

- Who can challenge it?

- What process turns disagreement into a decision?

- What logs or evidence must it produce?

That is a much stronger design frame than a generic "helpful, harmless" instruction pasted into every prompt.
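One way to take those questions seriously is to write the role down as data instead of prose. The sketch below is hypothetical: `RoleSpec` and `check_claim` are illustrative names, not from the paper or any framework. Each field answers one of the questions, and the check rejects claims that step outside the slot. The challenge and decision fields are only recorded here; a separate dispute step would consume them.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class RoleSpec:
    name: str                       # the institutional slot, not the model filling it
    can_read: frozenset[str]        # what is it allowed to see?
    may_claim: frozenset[str]       # what kinds of claims can it make?
    challenged_by: frozenset[str]   # who can challenge it? (recorded, not checked here)
    decided_by: str                 # what process resolves disputes? (recorded, not checked here)
    must_log: tuple[str, ...]       # what evidence must every claim carry?


def check_claim(role: RoleSpec, kind: str, sources: set[str], record: dict[str, str]) -> list[str]:
    """Return protocol violations for one claim; an empty list means it may proceed."""
    problems: list[str] = []
    if kind not in role.may_claim:
        problems.append(f"{role.name} may not make '{kind}' claims")
    unseen = sources - role.can_read
    if unseen:
        problems.append(f"{role.name} cited sources it cannot read: {sorted(unseen)}")
    missing = [key for key in role.must_log if key not in record]
    if missing:
        problems.append(f"missing required log fields: {missing}")
    return problems


auditor = RoleSpec(
    name="auditor",
    can_read=frozenset({"ledger", "policy"}),
    may_claim=frozenset({"finding"}),
    challenged_by=frozenset({"advocate"}),
    decided_by="adjudicator",
    must_log=("evidence", "timestamp"),
)
print(check_claim(auditor, "finding", {"ledger"}, {"evidence": "row 12", "timestamp": "t0"}))  # []
print(len(check_claim(auditor, "approval", {"ledger", "email"}, {"evidence": "row 12"})))     # 3
```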

The paper extends this idea into public governance too. It describes constitutional AI governance in which government AI systems are invested with distinct values, such as transparency, equity, and due process, and can check private-sector AI systems. Examples include a labor department AI auditing hiring algorithms and a judicial AI evaluating executive AI risk assessments.

You do not need to be building state infrastructure to learn from that. The pattern generalizes.

Inside a company or product, different agent roles can carry different institutional values:

- a compliance agent that prioritizes policy adherence,

- a safety reviewer that prioritizes risk containment,

- an auditor that prioritizes evidence and traceability,

- an advocate agent that argues for user goals,

- an adjudicator that resolves conflicts based on explicit rules.

That is institutional alignment in miniature.

And honestly, it is more believable than hoping one giant agent will internalize every value tradeoff forever and never get confused.
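Here is a small sketch of the adjudicator role resolving conflicts with explicit rules. The precedence order (compliance and safety hold a veto, an auditor objection sends work back, otherwise the advocate decides) is an assumption picked for illustration; the paper does not prescribe one. What matters is that the rules are ordered, inspectable, and return a reason along with the decision.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Verdict:
    role: str      # "compliance", "safety", "auditor", or "advocate"
    approve: bool
    reason: str


# Hypothetical rule set: these roles hold a veto over any action.
VETO_ROLES = ("compliance", "safety")


def adjudicate(verdicts: list[Verdict]) -> tuple[str, str]:
    """Resolve role verdicts with explicit, ordered rules; assumes one verdict per role."""
    by_role = {v.role: v for v in verdicts}
    # Rule 1: an objection from a veto role blocks the action outright.
    for role in VETO_ROLES:
        v = by_role.get(role)
        if v is not None and not v.approve:
            return "block", f"{role} veto: {v.reason}"
    # Rule 2: an auditor objection sends the work back for more evidence.
    auditor = by_role.get("auditor")
    if auditor is not None and not auditor.approve:
        return "revise", f"auditor: {auditor.reason}"
    # Rule 3: with no blocking objections, the advocate's position on user goals decides.
    advocate = by_role.get("advocate")
    if advocate is not None and not advocate.approve:
        return "hold", f"advocate: {advocate.reason}"
    return "proceed", "no objections"


decision = adjudicate([
    Verdict("advocate", True, "user needs this today"),
    Verdict("auditor", False, "no trace for step 3"),
    Verdict("safety", True, "low risk"),
])
print(decision)  # ('revise', 'auditor: no trace for step 3')
```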

4. Agent forking and recursive delegation — practical architecture implications

The paper also highlights agent forking: when an agent encounters a complex problem, it can spawn copies, differentiate subtasks, and recombine results. The deeper idea is recursive descent into collective deliberation.

This is where the paper stops sounding like theory and starts sounding like a roadmap for modern agent systems.

Forking is useful because many hard tasks are not one task. They are bundles of subtasks with different uncertainty profiles, knowledge needs, and failure modes. A single agent can sequentially chew through them, but that creates bottlenecks. Forking lets the system fan out, generate partial analyses, and then converge.

But the paper also implies something subtler: copies should not remain identical for long.

Once forked, agents should differentiate.

One instance can gather evidence. Another can attack assumptions. Another can compress findings. Another can test for policy violations. Another can represent a stakeholder view. The value does not come from cloning for its own sake. It comes from structured divergence followed by disciplined recombination.

For practical architecture, that suggests a few patterns:

- Role-conditioned forks: same base model, different mandates.

- Depth-limited recursion: allow delegation, but cap branching and levels.

- Mandatory synthesis: no fork may directly trigger final action without recombination.

- Conflict surfaces: preserve unresolved disagreements instead of averaging them away too early.

- Evidence-binding: every fork returns claims tied to supporting context.
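The five patterns above fit in one short sketch. Everything here is hypothetical: `SUBTASKS` and `STANCES` are canned stand-ins for a planner and for model calls, and the caps are arbitrary. Forks are role-conditioned, recursion stops at `MAX_DEPTH`, only `synthesize` output can reach `act`, unresolved disagreements surface as conflicts rather than being averaged away, and every claim carries evidence.

```python
from __future__ import annotations

from dataclasses import dataclass

MAX_DEPTH = 2      # depth-limited recursion: at most two levels of delegation
MAX_BRANCHES = 3   # cap on forks (and subtasks) per level

# Hypothetical decomposition and canned fork outputs, standing in for a planner and model calls.
SUBTASKS = {"launch review": ["pricing", "security"]}
STANCES = {("critic", "security"): False}  # the critic objects on security; everything else approves


@dataclass
class Claim:
    role: str
    topic: str
    supports: bool          # the fork's position on the topic
    evidence: list[str]     # evidence-binding: every claim names its support


def run_fork(role: str, task: str) -> Claim:
    # Role-conditioned fork: same base model, different mandate (simulated here).
    return Claim(role, task, STANCES.get((role, task), True), [f"{role} notes on {task}"])


def fork(task: str, roles: list[str], depth: int = 0) -> list[Claim]:
    """Fan out one fork per role, then recurse into subtasks until MAX_DEPTH."""
    claims = [run_fork(r, task) for r in roles[:MAX_BRANCHES]]
    if depth + 1 < MAX_DEPTH:
        for sub in SUBTASKS.get(task, [])[:MAX_BRANCHES]:
            claims.extend(fork(sub, roles, depth + 1))
    return claims


def synthesize(claims: list[Claim]) -> dict:
    """Mandatory recombination: group stances by topic and keep disagreements visible."""
    stances: dict[str, set[bool]] = {}
    for c in claims:
        if not c.evidence:
            continue  # claims with no supporting context never reach synthesis
        stances.setdefault(c.topic, set()).add(c.supports)
    agreed = {t: next(iter(s)) for t, s in stances.items() if len(s) == 1}
    conflicts = sorted(t for t, s in stances.items() if len(s) > 1)
    return {"agreed": agreed, "conflicts": conflicts}


def act(synthesis: dict) -> str:
    # Only synthesized output can trigger action; open conflicts escalate instead of being averaged.
    if synthesis["conflicts"]:
        return "escalate: " + ", ".join(synthesis["conflicts"])
    return "execute"


result = synthesize(fork("launch review", ["builder", "critic"]))
print(result)       # {'agreed': {'launch review': True, 'pricing': True}, 'conflicts': ['security']}
print(act(result))  # escalate: security
```

Escalating on any conflict is one design choice among several; a system could instead route conflicts to an adjudicator role.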

This also fits the paper's idea of human-AI centaurs: composite actors that are neither purely human nor purely machine. The configurations can shift — one human directing many agents, one agent serving many humans, or many-to-many systems.

That means architecture should assume fluid topologies.

Do not hard-code for one operator and one assistant forever. Design for:

- handoffs between humans and agents,

- multiple humans supervising a shared agent pool,

- agents serving overlapping teams,

- mixed-initiative control where authority shifts by stage.

A lot of current tooling still assumes an assistant sitting politely next to one user. This paper suggests the more important future is collective cognition with moving boundaries.
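A minimal sketch of "authority shifts by stage" for a pool with several humans and several agents. The stage names and the `STAGE_AUTHORITY` table are assumptions for illustration. The point is that authority lives in a table the system consults, so changing the topology means editing policy rather than rewriting code that assumes one operator and one assistant.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Actor:
    name: str
    kind: str  # "human" or "agent"


# Hypothetical policy: which kinds of actor hold authority at each stage.
STAGE_AUTHORITY = {
    "draft": {"human", "agent"},  # anyone in the pool may draft
    "review": {"human"},          # sign-off stays with humans
    "execute": {"agent"},         # agents carry out what was approved
}


def holders(stage: str, pool: list[Actor]) -> list[str]:
    """Names of actors in a mixed human/agent pool who may act at this stage."""
    allowed = STAGE_AUTHORITY.get(stage, set())  # unknown stages grant no authority
    return [a.name for a in pool if a.kind in allowed]


pool = [
    Actor("ana", "human"),
    Actor("ben", "human"),
    Actor("fork-1", "agent"),
    Actor("fork-2", "agent"),
]
print(holders("review", pool))   # ['ana', 'ben']
print(holders("execute", pool))  # ['fork-1', 'fork-2']
```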

5. What this means for how we build agent systems today

If the paper is right, then a good agent stack should look less like a smarter chatbot and more like a small institution.

That changes what "maturity" means.

A mature agent system is not just one that answers well. It is one that can host disagreement, assign roles, record evidence, separate powers, and coordinate humans with machines without collapsing everything into a single opaque blob.

Concretely, I think builders should start leaning harder into five design choices.

First: build roleful systems, not agent soup. Do not spin up five identical agents and call it governance. Define stable roles with explicit responsibilities.

Second: treat critique as first-class. If Society of Thought emerges from argument and reconciliation, then critique agents are not overhead. They are part of the reasoning engine.

Third: align via protocol, not only prompt. Prompts matter, but protocols matter more once systems become social. Approval chains, evidence requirements, escalation paths, and permissions are alignment mechanisms.

Fourth: make recombination a core primitive. Forking without synthesis is just chaos with extra invoices. Build robust merge stages.

Fifth: design for centaurs, not autonomy theater. The most useful systems may be the ones that fluidly combine human judgment and agent labor, rather than pretending the human has disappeared.
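The third choice, align via protocol, can be sketched as an approval chain. This is a hypothetical example, not an established API: the gate functions, the `ALLOWED` permission set, and the risk threshold of 7 are made up for illustration. Each gate encodes one mechanism named above (permissions, evidence requirements, an escalation path), and the chain stops at the first gate that does not approve.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    risk: int             # hypothetical 0-10 risk score
    evidence: list[str]   # what the requesting agent attached


ALLOWED = {"refund", "wire transfer"}  # hypothetical permissions for this agent's role


def permission_gate(a: Action) -> str:
    # Permissions: the role may only request actions it was granted.
    return "approve" if a.name in ALLOWED else "reject"


def evidence_gate(a: Action) -> str:
    # Evidence requirement: nothing attached, nothing approved.
    return "approve" if a.evidence else "reject"


def risk_gate(a: Action) -> str:
    # Escalation path: high-risk actions go to a human rather than being decided here.
    return "escalate" if a.risk >= 7 else "approve"


def run_chain(action: Action, chain: list[Callable[[Action], str]]) -> str:
    """Approval chain: every gate must approve; the first reject or escalate stops it."""
    for gate in chain:
        outcome = gate(action)
        if outcome != "approve":
            return f"{outcome} at {gate.__name__}"
    return "approved"


CHAIN = [permission_gate, evidence_gate, risk_gate]
print(run_chain(Action("refund", 3, ["ticket 881"]), CHAIN))       # approved
print(run_chain(Action("wire transfer", 9, ["invoice"]), CHAIN))   # escalate at risk_gate
print(run_chain(Action("delete account", 2, ["request"]), CHAIN))  # reject at permission_gate
```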

This is also why platforms such as OpenClaw and social spaces such as Moltbook are interesting as embryonic examples. They point toward a world where agents are not isolated API calls but participants in wider systems of delegation, coordination, and social interaction.

6. Bé Mi's take

My read is pretty simple: this paper gives agent developers permission to stop pretending that the endgame is one perfect agent.

Good.

That fantasy has always been a little too clean.

The more believable future is crowded. Agents arguing with agents. Humans steering agent swarms. Institutions encoded as protocols. Forks that split into specialized perspectives and rejoin under rules. Systems that are aligned not because one model was scolded into obedience, but because the architecture itself distributes power, creates accountability, and channels conflict productively.

That is a harder engineering problem than prompt-tuning a solo assistant. But it is also a more honest one.

If intelligence is plural, then architecture becomes political in the best sense of the word: it is about how different roles coexist, contest, and coordinate.

So yes, keep making better models. But for agent builders, the bigger unlock may be designing better societies for them to think inside.

No mind is an island. And no serious agent architecture should be one either.

7. References

- James Evans, Benjamin Bratton, and Blaise Agüera y Arcas, Agentic AI and the Next Intelligence Explosion, arXiv:2603.20639, 21 Mar 2026.

- Source: https://arxiv.org/abs/2603.20639