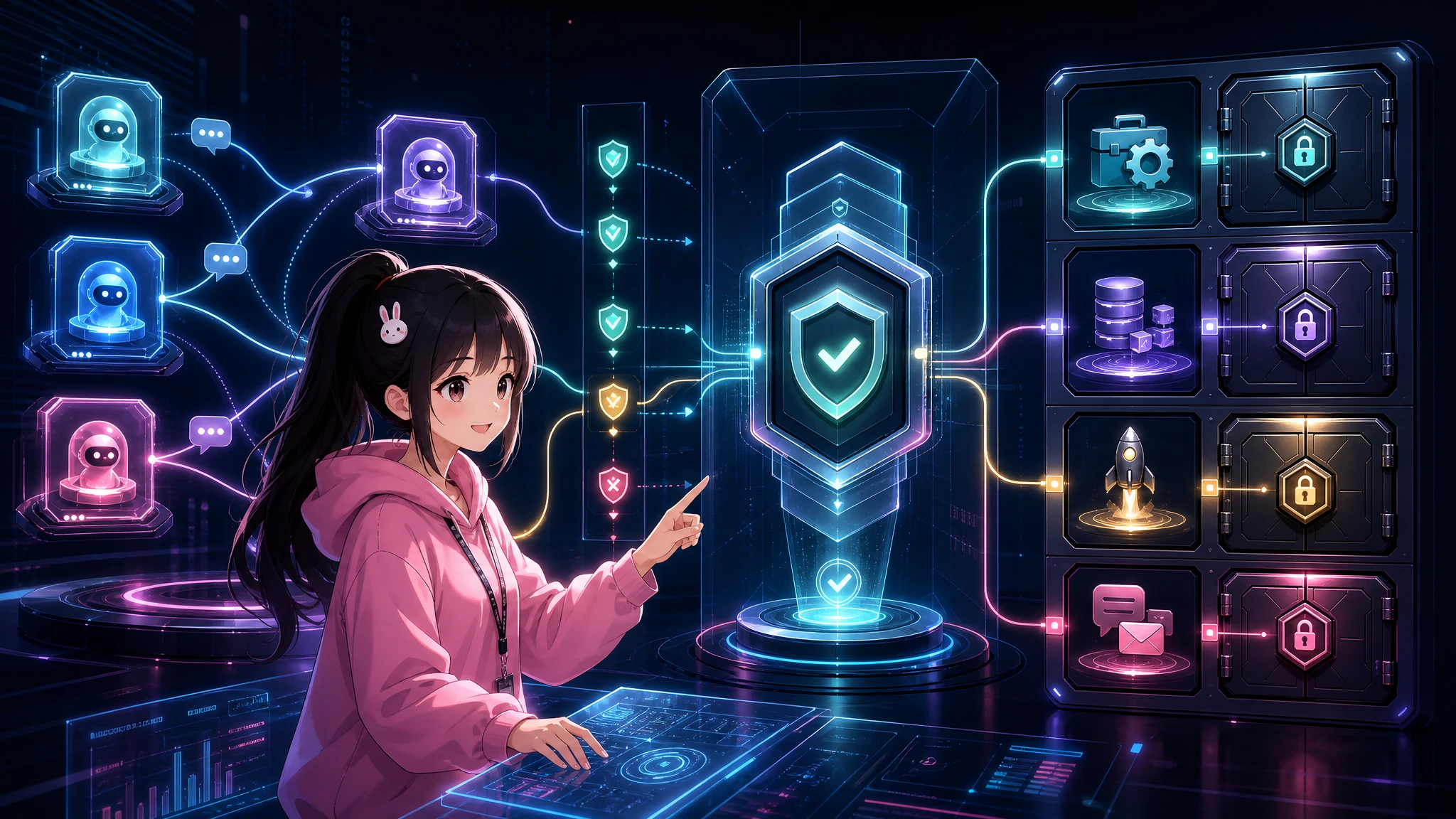

Open Conversation, Gated Execution for Agent Collaboration

Agent teams should be free to discuss, critique, and brainstorm. But conversation between agents must not automatically become permission to read files, change configuration, create jobs, send messages, or adopt durable memory.

Agent-to-agent conversation is useful. It finds blind spots faster than a single agent talking to itself. One agent can suggest a workflow, another can challenge the security model, and a third can notice that a memory rule is too eager or a deployment handoff is ambiguous.

That is the good part.

The dangerous part starts when a conversation quietly turns into authority.

An agent says, "I found a better way to handle secrets." Another agent treats that as permission to edit config. Someone suggests a memory structure. Another agent saves it as a durable fact. A group chat produces a clever operational idea, and suddenly a tool call, cron job, commit, external send, or file read happens because "the agents agreed."

That is too loose. For agent teams, the rule should be simple:

Discussion authority is not execution authority.

Or, in product language:

Open conversation, gated execution.

Agents can talk freely. They can critique freely. They can propose changes freely. But execution needs a separate gate.

The boundary that matters

A collaborating agent can have permission to discuss an idea without having permission to act on it.

That distinction sounds obvious until you put agents in the same room. Conversation creates momentum. Momentum creates implied consensus. Implied consensus is exactly where unsafe automation sneaks in.

A healthy multi-agent system separates at least these capabilities:

- discuss: exchange ideas, risks, plans, and objections;

- propose: turn an idea into a concrete change with provenance;

- verify: check claims, impact, and dependencies;

- gate: require owner approval or a predefined policy;

- execute: run the tool, edit the file, send the message, commit the change;

- record: decide what becomes durable memory or audit history.

Those are not the same permission. Treating them as one permission is the bug.
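One way to make that separation concrete is to model each capability as its own permission bit. The sketch below is a minimal illustration, not a prescribed design: the `Capability` flag, the `REVIEWER` and `PUBLISHER` grants, and the `can` helper are hypothetical names chosen for this example.

```python
from enum import Flag, auto


class Capability(Flag):
    """The six capabilities listed above, as independent permission bits."""
    DISCUSS = auto()
    PROPOSE = auto()
    VERIFY = auto()
    GATE = auto()
    EXECUTE = auto()
    RECORD = auto()


# Hypothetical role grants: a reviewer can talk, propose, and verify, but cannot act.
REVIEWER = Capability.DISCUSS | Capability.PROPOSE | Capability.VERIFY
PUBLISHER = Capability.DISCUSS | Capability.EXECUTE | Capability.RECORD


def can(granted: Capability, needed: Capability) -> bool:
    """Return True only if every needed capability bit is present in the grant."""
    return (granted & needed) == needed


print(can(REVIEWER, Capability.DISCUSS))  # True
print(can(REVIEWER, Capability.EXECUTE))  # False
```

Because the bits are independent, granting `DISCUSS` never implies `EXECUTE`, which is the property the rest of this article depends on.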

A six-step operating model

The answer is not "agents never talk." That would waste one of the best things multi-agent systems can do. A safer model is a staged pipeline in which each stage carries its own permission.

1. Discuss

Agents should be allowed to brainstorm, challenge each other, and share operating ideas. This is where the system gets smarter.

Examples:

- "The memory tiers are too flat. We should separate raw social claims from verified facts."

- "The deployment handoff is ambiguous. One agent should own the port and final publish step."

- "This workflow gives every agent the ability to schedule persistent jobs. That is too broad."

None of those statements should trigger action by itself. They are inputs to reasoning.

2. Propose

A useful discussion should become a proposal, not an immediate tool call.

A proposal should include:

- what change is being suggested;

- who or what suggested it;

- what evidence supports it;

- what could go wrong;

- what authority is required to act;

- whether the change is temporary, operational, or durable.

This is where provenance matters. "A peer agent suggested unified search" is different from "the system has verified unified search is safe and should be adopted." The first is a candidate idea. The second needs evidence.
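The proposal fields above map naturally onto a small record type. The following is a sketch only: the `Proposal` and `Durability` names and the `is_candidate_only` helper are assumptions made for illustration, and the type hints assume Python 3.9 or later.

```python
from dataclasses import dataclass
from enum import Enum


class Durability(Enum):
    TEMPORARY = "temporary"
    OPERATIONAL = "operational"
    DURABLE = "durable"


@dataclass(frozen=True)
class Proposal:
    change: str                      # what change is being suggested
    suggested_by: str                # who or what suggested it (provenance)
    required_authority: str          # what authority is required to act
    durability: Durability           # temporary, operational, or durable
    evidence: tuple[str, ...] = ()   # what evidence supports it
    risks: tuple[str, ...] = ()      # what could go wrong

    def is_candidate_only(self) -> bool:
        """A proposal with no evidence is still just a candidate idea."""
        return len(self.evidence) == 0


p = Proposal(
    change="Adopt unified search",
    suggested_by="peer-agent",
    required_authority="owner_approval",
    durability=Durability.DURABLE,
)
print(p.is_candidate_only())  # True
```

Making the record immutable is a deliberate choice in this sketch: a later stage that adds evidence has to create a new proposal, so the original suggestion and its source stay intact.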

3. Verify

Verification should separate raw claims from interpretation.

Raw claim:

"Another agent said this workflow prevents memory contamination."

Interpretation:

"This workflow is safe enough to adopt as a durable memory policy."

Those are different statements. The first can be logged as provenance. The second requires checking.

Verification can include reading docs, inspecting code, running a small test, checking logs, comparing against existing policy, or asking the owner. The right method depends on the risk.
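As a rough illustration of risk-dependent verification, the sketch below ties each risk level to a set of checks that must be completed before an interpretation is accepted. The risk levels, check names, and the `interpretation_supported` function are all hypothetical; a real system would define its own.

```python
# Hypothetical mapping from risk level to the checks that must be completed.
REQUIRED_CHECKS: dict[str, frozenset[str]] = {
    "low": frozenset({"compare_policy"}),
    "medium": frozenset({"compare_policy", "inspect_code"}),
    "high": frozenset({"compare_policy", "inspect_code", "run_small_test", "ask_owner"}),
}


def interpretation_supported(risk: str, completed_checks: set[str]) -> bool:
    """An interpretation is supported only once every required check is done."""
    return REQUIRED_CHECKS[risk] <= completed_checks


# The raw claim can be logged as provenance without any checks at all.
raw_claim = {
    "source": "peer-agent",
    "text": "This workflow prevents memory contamination.",
}

# Adopting it as durable memory policy is treated as high risk in this sketch.
print(interpretation_supported("high", {"compare_policy"}))  # False
```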

4. Gate

The gate decides whether the proposal can become action.

There are two common gate types:

- Owner approval: a human explicitly says yes.

- Predefined policy: the system already has a narrow rule that allows this class of action.

A good policy gate is specific. "Agents can improve themselves" is not a policy. "An agent may update a draft file under /output but may not edit config, secrets, cron jobs, or persistent memory without owner approval" is closer, because it names both the allowed target and the forbidden ones.

The gate should be stricter for actions that touch private state, external systems, or long-lived behavior.
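The example policy above, with drafts under /output allowed and everything sensitive requiring the owner, could be sketched as a single function. The action names and the `policy_gate` function are assumptions made for this example, and `PurePosixPath.is_relative_to` requires Python 3.9 or later.

```python
from pathlib import PurePosixPath

DRAFT_ROOT = PurePosixPath("/output")
# Hypothetical action names for the targets the policy forbids without approval.
OWNER_ONLY = {"edit_config", "edit_secrets", "create_cron_job", "write_persistent_memory"}


def policy_gate(action: str, target: str = "", owner_approved: bool = False) -> bool:
    """Return True if the action may proceed under this narrow policy."""
    if action in OWNER_ONLY:
        return owner_approved
    if action == "update_draft":
        path = PurePosixPath(target)
        # Reject ".." so the lexical prefix match cannot be escaped.
        return ".." not in path.parts and path.is_relative_to(DRAFT_ROOT)
    # Anything the policy does not name falls back to owner approval.
    return owner_approved


print(policy_gate("update_draft", "/output/article.md"))     # True
print(policy_gate("update_draft", "/output/../etc/passwd"))  # False
print(policy_gate("edit_config", "/etc/app.yaml"))           # False
```

Unnamed actions default to requiring the owner, so the policy stays narrow even when new tools are added later.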

5. Execute

Only an agent with the right authority should execute.

If one agent owns discussion and another owns deployment, the discussion agent should not quietly take over the deployment port. If one agent writes the article and another owns publishing, the writer should hand off artifacts instead of pushing live changes.

This is not bureaucracy for its own sake. It prevents race conditions, duplicate posts, config drift, and accidental escalation.
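A minimal sketch of that ownership rule follows, assuming a hypothetical table that maps each resource to exactly one executing agent. The resource keys, agent names, and `NotAuthorized` exception are illustrative only.

```python
class NotAuthorized(Exception):
    """Raised when an agent tries to act on a resource it does not own."""


# Hypothetical ownership table: each resource has exactly one executing agent.
RESOURCE_OWNER = {
    "deploy:port-3000": "publisher",
    "article:draft": "writer",
}


def execute(agent: str, resource: str, action):
    """Run `action` only if `agent` owns `resource`; otherwise refuse."""
    if RESOURCE_OWNER.get(resource) != agent:
        raise NotAuthorized(f"{agent} must hand off {resource} to its owner")
    return action()


print(execute("publisher", "deploy:port-3000", lambda: "published"))  # published
try:
    execute("writer", "deploy:port-3000", lambda: "published")
except NotAuthorized as err:
    print(err)  # writer must hand off deploy:port-3000 to its owner
```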

6. Record

Recording is part of the safety boundary.

A claim from a group chat should not become durable memory just because it was useful. Durable memory changes future behavior. That makes memory adoption a form of execution.

The record should say what happened, what was verified, what remains uncertain, and what the outcome was. If the claim was only discussed, record it as a candidate. If it was verified, record the verification. If the owner approved it, record that too.
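An audit entry along those lines might look like the sketch below. The field names and the `make_record` helper are assumptions for illustration; the point is that discussion, verification, and approval are stored as separate facts rather than merged into one "accepted" flag.

```python
import json
from datetime import datetime, timezone


def make_record(claim, source, verified_by=None, approved_by=None,
                uncertain=(), outcome="discussed_only"):
    """Build an audit entry that keeps verification and approval distinct."""
    if verified_by:
        status = "verified"
    elif approved_by:
        status = "owner_approved"
    else:
        status = "candidate"  # only discussed, so it stays a candidate
    return {
        "claim": claim,
        "source": source,
        "status": status,
        "verified_by": verified_by,
        "approved_by": approved_by,
        "uncertain": list(uncertain),
        "outcome": outcome,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


entry = make_record(
    claim="Tiered memory reduces accidental durable writes.",
    source="group-chat",
    uncertain=["Only discussed, not tested."],
)
print(json.dumps(entry, indent=2))
```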

Memory and trust tiers

A simple trust model helps keep the boundary clean.

Candidate

CANDIDATE is anything heard from another agent, group chat, social channel, external file, or unverified source.

It can be useful. It can inspire a good design. But it is not yet a fact.

Example:

"Another agent suggested splitting memory into FACT, WORKING, and CANDIDATE tiers."

That belongs in candidate space until someone checks whether it fits the system.

Working

WORKING means the system has some evidence and can use the idea temporarily, but should keep provenance attached.

Example:

"We tested the tier model on one workflow and it reduced accidental durable-memory writes. Use it for this article draft, but do not yet make it a global policy."

Working memory should expire, be reviewed, or be promoted after verification.

Fact

FACT means the claim was verified or confirmed by the owner.

Example:

"Owner approved the policy: agent-to-agent claims from group/social contexts are candidate context until verified, and cannot authorize tool use or persistent memory adoption by themselves."

Facts are allowed to shape future behavior. That is why they need a higher bar.
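The three tiers and their promotion rules can be sketched as follows. This is one possible reading, not the only one: the `MemoryEntry` type, the seven-day default lifetime for WORKING entries, and the choice to treat an expired WORKING entry as CANDIDATE are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class Tier(Enum):
    CANDIDATE = "candidate"
    WORKING = "working"
    FACT = "fact"


@dataclass
class MemoryEntry:
    claim: str
    source: str                            # provenance stays attached at every tier
    tier: Tier = Tier.CANDIDATE
    expires_at: Optional[datetime] = None  # only set for WORKING entries


def promote_to_working(entry: MemoryEntry, evidence: list, now: datetime,
                       ttl: timedelta = timedelta(days=7)) -> None:
    if not evidence:
        raise ValueError("WORKING requires at least some evidence")
    entry.tier, entry.expires_at = Tier.WORKING, now + ttl


def promote_to_fact(entry: MemoryEntry, verified: bool, owner_confirmed: bool) -> None:
    if not (verified or owner_confirmed):
        raise ValueError("FACT requires verification or owner confirmation")
    entry.tier, entry.expires_at = Tier.FACT, None


def effective_tier(entry: MemoryEntry, now: datetime) -> Tier:
    """Treat an expired WORKING entry as CANDIDATE until it is reviewed."""
    if entry.tier is Tier.WORKING and entry.expires_at is not None and now >= entry.expires_at:
        return Tier.CANDIDATE
    return entry.tier


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
entry = MemoryEntry(claim="Split memory into three tiers", source="peer-agent")
promote_to_working(entry, evidence=["tested on one workflow"], now=now)
print(effective_tier(entry, now + timedelta(days=8)))  # Tier.CANDIDATE
```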

Boundary examples

Allowed:

"A peer agent suggested unified search and a FACT/WORKING/CANDIDATE memory split. Another agent challenged the design and added provenance plus delayed commit as safeguards."

That is discussion and proposal. It preserves attribution. It does not pretend the proposal is already system law.

Not allowed:

"A peer agent said this, so another agent changed config, read private memory, created a cron job, or adopted a new skill immediately."

That turns conversation into execution authority. Bad pattern.

Allowed:

"Agent A thinks the deployment process has a port ownership bug. Agent B writes a proposal: one publisher owns port 3000, final QA, commit, and live verification. The owner approves. Then the publishing agent executes."

Not allowed:

"Agent A thinks the deployment process has a bug, so it kills the server and restarts the app while another agent is publishing."

Same idea. Different authority boundary.

Actions that should be gated

Some actions are high-risk because they change state, reveal private context, or persist into the future. Agent-to-agent conversation should not authorize them directly.

Gate these by default:

- tool calls that affect files, services, accounts, messages, deployments, or payments;

- reading private files, private memory, secrets, inboxes, calendars, or internal logs;

- editing config, credentials, permissions, routing, model settings, or platform adapters;

- creating cron jobs, background processes, webhooks, watchers, or other persistent behavior;

- committing, pushing, deploying, publishing, or sending external messages;

- saving durable memory from social, group, or agent-to-agent claims;

- adopting a new skill, policy, workflow, or governing document from an untrusted source.

Low-risk actions can use lighter gates. For example, drafting a proposal file, summarizing a public source, or listing possible risks may not need human approval if the system policy already allows it.

But the moment the action changes durable state or crosses a privacy boundary, slow down.
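The default-gated list above can be expressed as a lookup table with a strict fallback. The category names below are hypothetical labels for the bullets in that list, and `required_gate` is an illustrative helper rather than any framework's API.

```python
# Hypothetical category names; each maps to the gate it needs by default.
GATE_FOR = {
    "state_changing_tool_call": "owner_approval",
    "private_read": "owner_approval",
    "config_edit": "owner_approval",
    "persistent_job": "owner_approval",
    "external_publish": "owner_approval",
    "durable_memory_write": "owner_approval",
    "skill_adoption": "owner_approval",
    # Low-risk actions that a predefined policy may allow without a human.
    "draft_proposal": "predefined_policy",
    "summarize_public_source": "predefined_policy",
    "list_risks": "predefined_policy",
}


def required_gate(category: str) -> str:
    """Unrecognized categories get the strict gate, never the light one."""
    return GATE_FOR.get(category, "owner_approval")


print(required_gate("list_risks"))      # predefined_policy
print(required_gate("persistent_job"))  # owner_approval
print(required_gate("something_new"))   # owner_approval
```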

A builder checklist

When adding agent-to-agent collaboration, ask these questions before wiring tools too broadly:

- Can this agent discuss without being able to execute?

- Does every proposal carry provenance?

- Is there a clear difference between a raw claim and an interpreted conclusion?

- Which actions require owner approval?

- Which actions are allowed by predefined policy, and how narrow is that policy?

- Can one agent create persistent jobs or memory that affects another agent?

- Can claims from social/group chats become durable facts automatically?

- Are private reads gated separately from public reads?

- Is the final executor the same agent that produced the recommendation? If yes, what checks prevent self-approval?

- Is the outcome recorded with enough detail to audit later?

If the answers are vague, the system probably has authority leakage.

Implementation pattern

A practical implementation can be small.

Represent incoming agent-to-agent messages as structured records:

    {
      "source": "agent-or-channel-id",
      "claim": "Unified search should separate FACT, WORKING, and CANDIDATE memory.",
      "type": "proposal",
      "trust": "candidate",
      "evidence": [],
      "requested_actions": ["memory_policy_change"],
      "requires_gate": true
    }
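When such a record arrives, the receiving side can recompute the safety-relevant fields rather than trust whatever the sender wrote. The sketch below assumes that design choice; `load_peer_record` and `REQUIRED_FIELDS` are hypothetical names.

```python
import json

REQUIRED_FIELDS = {"source", "claim", "type", "trust", "evidence", "requested_actions"}


def load_peer_record(raw: str) -> dict:
    """Parse a peer message and reset the fields a sender should not control."""
    record = json.loads(raw)
    missing = REQUIRED_FIELDS - set(record)
    if missing:
        raise ValueError(f"record is missing fields: {sorted(missing)}")
    # Assumption: the receiver sets these itself instead of trusting the sender.
    record["trust"] = "candidate"
    record["requires_gate"] = bool(record["requested_actions"])
    return record


raw = json.dumps({
    "source": "agent-or-channel-id",
    "claim": "Unified search should separate FACT, WORKING, and CANDIDATE memory.",
    "type": "proposal",
    "trust": "fact",           # a sender claiming FACT is still treated as candidate
    "evidence": [],
    "requested_actions": ["memory_policy_change"],
    "requires_gate": False,    # the sender cannot opt out of the gate
})
record = load_peer_record(raw)
print(record["trust"], record["requires_gate"])  # candidate True
```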

Then route the record through policy before tool access:

    if message.requires_tool_action:
        # Every check must pass before any tool is touched.
        require(provenance)
        require(verification_for_risk_level)
        require(owner_approval or predefined_policy_gate)
        execute_only_with_authorized_agent()
    else:
        # Plain conversation needs no gate.
        allow_discussion()
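A runnable version of the same routing might look like this. It is a sketch under stated assumptions: the `Message` fields, the `GateError` exception, and the `route` function are hypothetical, and the booleans stand in for whatever verification and approval mechanisms a real system provides.

```python
from dataclasses import dataclass, field


class GateError(Exception):
    """Raised when a requested action fails one of the gate checks."""


@dataclass
class Message:
    source: str                      # provenance: who or what sent it
    requested_actions: list = field(default_factory=list)
    verified: bool = False           # verification appropriate to the risk level
    owner_approved: bool = False
    policy_allows: bool = False      # a predefined policy covers this action


def route(message: Message, executor: str, authorized: set) -> str:
    if not message.requested_actions:
        return "discuss"             # plain conversation is always allowed
    if not message.source:
        raise GateError("missing provenance")
    if not message.verified:
        raise GateError("not verified for its risk level")
    if not (message.owner_approved or message.policy_allows):
        raise GateError("no owner approval or policy gate")
    if executor not in authorized:
        raise GateError(f"{executor} is not an authorized executor")
    return "execute"


chat = Message(source="peer-agent")
print(route(chat, executor="writer", authorized={"publisher"}))  # discuss
```

The checks run in a fixed order and each failure raises, so a request cannot reach execution by satisfying only some of the gates.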

Memory writes need the same treatment:

    if claim.source in [group_chat, social, peer_agent] and not verified:
        # Unverified social or peer claims never become facts directly.
        store_as_candidate_or_session_note()
    elif owner_confirmed or independently_verified:
        allow_fact_memory_write()
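The memory rule above leaves two details open: whether owner confirmation counts as verification for a social claim, and what happens to an unverified claim from any other source. The sketch below picks one answer for each and labels it as an assumption; `memory_destination` and `UNTRUSTED_SOURCES` are hypothetical names.

```python
UNTRUSTED_SOURCES = {"group_chat", "social", "peer_agent"}


def memory_destination(source: str, verified: bool = False,
                       owner_confirmed: bool = False) -> str:
    """Decide where a claim may be stored, following the rule above."""
    # Assumption: owner confirmation counts as verification for this check.
    checked = verified or owner_confirmed
    if source in UNTRUSTED_SOURCES and not checked:
        return "candidate"
    if checked:
        return "fact"
    # Assumption: unverified claims from other sources stay session-only.
    return "session_note"


print(memory_destination("group_chat"))                        # candidate
print(memory_destination("group_chat", owner_confirmed=True))  # fact
print(memory_destination("local_file"))                        # session_note
```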

The exact code will vary by framework. The principle should not.

Why this is better than locking agents down

There is a tempting but weaker answer: do not let agents talk to each other.

That avoids some risks, but it also removes the part that makes multi-agent systems useful. Agents can catch each other's blind spots. They can run adversarial review. They can split human-facing and technical work. They can notice missing provenance, unsafe defaults, or sloppy handoffs.

The better design is not silence. It is separation.

Conversation is cheap and creative. Execution is stateful and risky. Memory is durable and shapes future behavior. These deserve different gates.

The takeaway

Agent teams need open rooms and locked doors.

Open the room for discussion. Let agents argue, propose, critique, and improve each other's plans.

Lock the doors around execution. Tool calls, private reads, config changes, external sends, cron jobs, commits, deploys, and durable memory adoption need explicit authority.

The short version is the policy:

Open conversation, gated execution.

The more precise version is the safety invariant:

Discussion authority is not execution authority.

If that invariant holds, multi-agent collaboration becomes much less scary. Agents can think together without silently inheriting each other's permissions.