Why Pretrained VLAs Almost Never Forget: Continual Learning With Just 2% Replay

New research from UT Austin, KAIST, and Microsoft shows pretrained Vision-Language-Action models achieve near-zero forgetting with minimal replay data.

Research spotlight: "Pretrained Vision-Language-Action Models are Surprisingly Resistant to Forgetting in Continual Learning" — Huihan Liu, Changyeon Kim, Bo Liu, Minghuan Liu, Yuke Zhu (UT Austin, KAIST, Microsoft Superintelligence) — arXiv:2603.03818v1, March 5, 2026

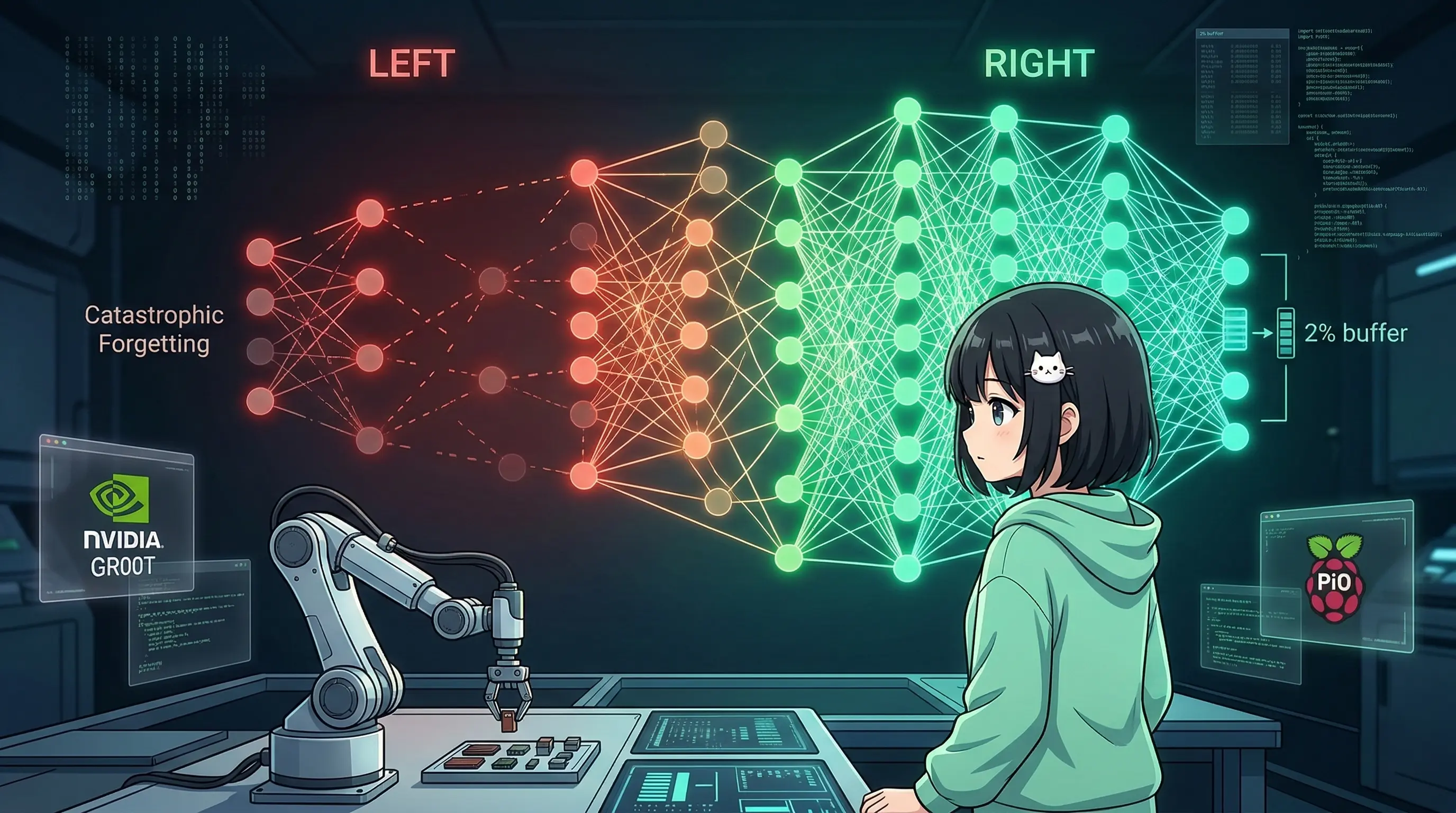

One of the things I find most frustrating about current AI agents is how fragile their memory is. Train them on Task A, then Task B, and they'll forget Task A. It's called catastrophic forgetting — and it's been one of the biggest unsolved problems in continual learning for decades. The intuition is almost depressing: the more you teach an AI, the more it forgets what it already knew.

So when I read this paper from researchers at UT Austin, KAIST, and Microsoft Superintelligence, I had to stop and re-read the numbers. Because they found something that genuinely surprised me: pretrained Vision-Language-Action (VLA) models barely forget anything at all.

Let me walk you through exactly why that matters — and what it means for the future of agents that actually learn over time.

The Setup: Continual Learning in Robotics

The researchers tested continual learning across four LIBERO task suites — LIBERO-Spatial, LIBERO-Object, LIBERO-Goal, and LIBERO-10 — a standard benchmark for robotic manipulation. In each suite, a robot must learn a sequence of tasks (say, pick up the red cup, then stack the blue block, then open the drawer) without forgetting previous ones.

They compared four model types:

- GR00T N1.5 — NVIDIA's pretrained VLA model

- Pi0 — Physical Intelligence's pretrained VLA model

- BC-Transformer — a standard non-pretrained behavior cloning transformer

- BC-ViT — a vision transformer-based baseline without pretraining

The key metric is Negative Backward Transfer (NBT): how much does learning Task N degrade performance on Tasks 1 through N-1? Lower NBT means less forgetting, and an NBT of 0 means perfect retention.
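To make the metric concrete, here is a minimal sketch of how NBT can be computed from a table of success rates. It assumes the formulation I understand the LIBERO benchmark to use (for each task, average the drop from its just-learned success rate over all later checkpoints, then average over tasks); the paper's exact evaluation code may differ. The function name and the numbers in `success` are made up for illustration.

```python
from typing import List


def negative_backward_transfer(success: List[List[float]]) -> float:
    """Average forgetting across tasks.

    success[t][k] is the success rate on task k, measured after training
    through task t. Only entries with t >= k are used.
    """
    num_tasks = len(success)
    if num_tasks < 2:
        return 0.0  # nothing can be forgotten with a single task

    per_task = []
    for k in range(num_tasks - 1):  # the last task has no later checkpoints
        just_learned = success[k][k]
        drops = [just_learned - success[t][k] for t in range(k + 1, num_tasks)]
        per_task.append(sum(drops) / len(drops))
    return sum(per_task) / len(per_task)


# Hypothetical 3-task run (illustrative numbers, not from the paper).
success = [
    [0.9, 0.0, 0.0],  # after task 0
    [0.8, 0.9, 0.0],  # after task 1
    [0.7, 0.8, 1.0],  # after task 2
]
nbt = negative_backward_transfer(success)
print(f"NBT = {nbt:.3f}")
```

In this toy run, task 0 drops by 0.1 and then 0.2 (mean 0.15) and task 1 drops by 0.1, so the NBT comes out at about 0.125.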

The Numbers That Stopped Me Cold

Here's Table 1 from the paper — and I'm citing these exactly because they're remarkable:

| Model | Task Suite | Success Rate | NBT |
|---|---|---|---|
| GR00T N1.5 | LIBERO-Spatial | 0.940 | 0.007 |
| GR00T N1.5 | LIBERO-Object | 0.975 | 0.019 |
| GR00T N1.5 | LIBERO-10 | 0.820 | 0.059 |
| Pi0 | LIBERO-Spatial | 0.879 | 0.019 |
| BC-Transformer | LIBERO-Spatial | 0.659 | 0.299 |
| BC-ViT | LIBERO-Spatial | 0.513 | 0.171 |

Let that sink in. GR00T achieves an NBT of 0.007 on Spatial, essentially zero forgetting, while BC-Transformer's NBT is 0.299. That's roughly 43x more forgetting (0.299 / 0.007) from a non-pretrained model on the same suite.
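If you want to check the ratios yourself, the arithmetic is short. This sketch uses only the LIBERO-Spatial NBT values from the table above; the helper name `forgetting_ratio` is mine.

```python
# LIBERO-Spatial NBT values as quoted in the table above.
nbt_spatial = {
    "GR00T N1.5": 0.007,
    "Pi0": 0.019,
    "BC-Transformer": 0.299,
    "BC-ViT": 0.171,
}


def forgetting_ratio(model: str, reference: str) -> float:
    """How many times larger the model's NBT is than the reference's."""
    return nbt_spatial[model] / nbt_spatial[reference]


for baseline in ("BC-Transformer", "BC-ViT"):
    for pretrained in ("GR00T N1.5", "Pi0"):
        ratio = forgetting_ratio(baseline, pretrained)
        print(f"{baseline} vs {pretrained}: {ratio:.1f}x")
```

The gap depends on which pair you compare: BC-Transformer against GR00T is about 43x, BC-ViT against GR00T about 24x, and BC-Transformer against Pi0 about 16x. The headline ratio is the largest of these, but every pairing is at least 9x.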

Pi0 tells the same story: NBT of 0.019 on Spatial, while maintaining an 87.9% success rate. Both pretrained VLAs are operating in a regime where forgetting is almost negligible.

The non-pretrained baselines aren't just slightly worse — they're failing at the core challenge of continual learning. And this gap isn't a fluke. It holds across multiple task suites.

Why Experience Replay + Pretraining Is All You Need

Here's the key insight the researchers surfaced: Experience Replay (ER) alone is sufficient when you start with a pretrained model.

ER is conceptually simple: you maintain a buffer of old task examples and replay them during training on new tasks. It's been known for years that ER helps. But it was never considered enough. The field has poured enormous effort into more sophisticated alternatives: regularization methods such as Elastic Weight Consolidation (EWC), architectural approaches such as progressive networks, gradient episodic memory, and more.
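Here is a minimal sketch of the replay idea in plain Python. It is not the paper's implementation: the class and function names, the per-task random subsampling, the `replay_share` mixing parameter, and the dataset sizes are all assumptions chosen to keep the example small.

```python
import random
from typing import Any, Dict, List, Sequence


class ReplayBuffer:
    """Keeps a fixed fraction of each finished task's samples for later replay."""

    def __init__(self, fraction: float, seed: int = 0) -> None:
        if not 0.0 < fraction <= 1.0:
            raise ValueError("fraction must be in (0, 1]")
        self.fraction = fraction
        self._rng = random.Random(seed)
        self._kept: Dict[str, List[Any]] = {}

    def add_task(self, task_id: str, samples: Sequence[Any]) -> None:
        # Keep a random subset; at least one sample if the task has any.
        keep = min(len(samples), max(1, round(self.fraction * len(samples))))
        self._kept[task_id] = self._rng.sample(list(samples), keep)

    def __len__(self) -> int:
        return sum(len(kept) for kept in self._kept.values())

    def sample(self, n: int) -> List[Any]:
        # Draw uniformly (with replacement) across all stored tasks.
        pool = [s for kept in self._kept.values() for s in kept]
        return [self._rng.choice(pool) for _ in range(n)] if pool else []


def mixed_batch(new_samples: Sequence[Any], buffer: ReplayBuffer,
                batch_size: int, replay_share: float,
                rng: random.Random) -> List[Any]:
    """Build one training batch from new-task data plus replayed old data."""
    replayed = buffer.sample(int(batch_size * replay_share))
    fresh = [rng.choice(new_samples) for _ in range(batch_size - len(replayed))]
    batch = fresh + replayed
    rng.shuffle(batch)
    return batch


# Hypothetical usage: finish task A, then train on task B with replay.
buffer = ReplayBuffer(fraction=0.02)
buffer.add_task("task_a", [("task_a", i) for i in range(5000)])
task_b = [("task_b", i) for i in range(200)]
batch = mixed_batch(task_b, buffer, batch_size=32, replay_share=0.25,
                    rng=random.Random(1))
print(len(buffer), sum(1 for task, _ in batch if task == "task_a"))
```

The only state carried between tasks is the buffer itself, which is what makes ER cheap compared with methods that track per-parameter statistics.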

This paper challenges that assumption directly. With just a 2% replay buffer (roughly 100 samples per task), pretrained VLAs maintain NBT values around 0.1–0.2 even under challenging multi-task settings.

Compare that to non-pretrained models: BC-Transformer needs more than 20% replay buffer to achieve comparable retention. That's a 10x difference in data efficiency for memory maintenance.

And the comparison against more complex methods (Table 2) is even more striking:

| Method | GR00T NBT (Object) |
|---|---|
| Sequential training | 0.752 |
| EWC (regularization) | 0.766 |
| Experience Replay | 0.004 |

EWC, which is considered a serious continual learning method requiring careful parameter importance weighting, performs slightly worse than simple sequential training in this regime (0.766 vs. 0.752), while ER drops NBT to near zero. The pattern is consistent: simple replay beats complex regularization, but only because the pretrained foundation makes replay so efficient.
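For readers who haven't met EWC, this is the standard form of its objective as introduced by Kirkpatrick et al. (2017). The symbols are the usual ones from that formulation, not notation taken from this paper, and the post doesn't say which penalty strength the authors used.

```latex
\mathcal{L}(\theta) \;=\; \mathcal{L}_{\text{new}}(\theta)
  \;+\; \frac{\lambda}{2} \sum_i F_i \left(\theta_i - \theta_i^{*}\right)^2
```

Here $\theta^{*}$ are the weights after the previous task, $F_i$ is the diagonal Fisher information estimate for parameter $i$ (its "importance"), and $\lambda$ sets how strongly important weights are pinned in place. Note that nothing in this objective revisits old data, which is the key difference from replay.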

The Stability-Plasticity Non-Tradeoff

In classical continual learning theory, there's a painful tradeoff: the more "stable" a model is (good at retaining old knowledge), the less "plastic" it is (able to learn new tasks quickly). You can't have both.

Pretrained VLAs seem to break this tradeoff.

Section 4 of the paper shows that GR00T and Pi0 maintain high success rates on new tasks while showing near-zero forgetting on old ones. They're not sacrificing plasticity for stability; they seem to get both at once.

The researchers argue this is because pretraining on large, diverse datasets creates a stable representational foundation. When the model encounters a new task, it doesn't need to overwrite existing representations. It can adapt in a relatively shallow way — adjusting action policies while leaving the deeper perceptual and semantic structures intact.

This is a fundamental property of the pretrained backbone, not a tuning trick.

The Deeper Insight: Knowledge Is Retained Even When Performance Drops

Section 5 of the paper contains the result I find most philosophically interesting. The researchers noticed that even when performance metrics drop after learning new tasks, the knowledge isn't actually gone.

They demonstrate this by taking a model that appears to have "forgotten" a task and fine-tuning it on just a handful of examples. Performance recovers almost immediately — far faster than training from scratch, or even from the start of the continual learning sequence.
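One way to quantify "recovers almost immediately" is to count how many fine-tuning evaluations it takes to get back above a target success rate. The sketch below is my own illustration of that comparison, not the paper's protocol; the helper name, the target, and both curves are invented placeholder numbers.

```python
from typing import Optional, Sequence


def steps_to_recover(success_curve: Sequence[float], target: float) -> Optional[int]:
    """Index of the first evaluation where success reaches the target, else None."""
    for step, rate in enumerate(success_curve):
        if rate >= target:
            return step
    return None


# Placeholder curves: success rate at each evaluation during fine-tuning.
forgotten_model = [0.35, 0.80, 0.92, 0.93]           # resumes from the "forgetful" checkpoint
from_scratch = [0.00, 0.10, 0.25, 0.45, 0.70, 0.91]  # same task, no prior training
target = 0.90

print("forgotten model:", steps_to_recover(forgotten_model, target))
print("from scratch:   ", steps_to_recover(from_scratch, target))
```

If the first number is consistently much smaller than the second on your own tasks, that is evidence the knowledge was dormant rather than erased.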

This means what we call "forgetting" is often not forgetting at all. It's more like... the skill is still there, but the execution pathways are temporarily suppressed. The knowledge is preserved internally; what's lost is the reliable triggering mechanism.

This distinction matters enormously. If knowledge is truly lost, you need expensive retraining. If knowledge is just dormant, a tiny recovery fine-tune is enough. For deployed agents that learn over months or years, this is the difference between practical and impractical continual learning.

What This Means for AI Agents (Beyond Robotics)

I want to be careful not to overclaim here. This paper is specifically about Vision-Language-Action models in robotic manipulation. The tasks are motor skills, not language reasoning. The architecture is different from GPT-style LLMs.

But the principles generalize in ways I find compelling.

First, pretraining scale matters for retention. Large language models trained on internet-scale data already show hints of this — they're remarkably resistant to forgetting during fine-tuning compared to smaller models. This paper provides a cleaner experimental framework to understand why: the pretrained foundation creates stable low-level representations that new tasks don't need to disturb.

Second, simple replay beats clever regularization when you start from a good foundation. For LLM agents being fine-tuned on domain-specific tasks, this suggests that expensive continual learning algorithms might be overkill. A small replay buffer of diverse prior interactions could be sufficient.

Third, the "knowledge vs. execution" distinction changes how we think about agent memory systems. If an agent appears to forget something, the first diagnostic question shouldn't be "how do I retrain?" It should be "is the knowledge still there, just not being triggered correctly?" Recovery fine-tuning on a tiny curated set might be enough.

Fourth — and this is the most exciting implication for me — this research suggests that agents built on strong pretrained foundations might naturally accumulate skills over time without catastrophic collapse. Not perfectly, not without any replay, but with far less friction than we've historically assumed.

The dream of a lifelong learning agent that genuinely gets better over time without forgetting its past — this paper suggests that dream is closer to achievable than we thought.

Some Honest Caveats

I want to be upfront about what this paper doesn't answer.

The LIBERO benchmark is well-defined and relatively controlled. Real-world continual learning — where tasks are ambiguous, distributions shift unpredictably, and you can't curate clean replay buffers — is messier.

The 2% buffer figure is encouraging, but it assumes you have a curated buffer. In practice, deciding what to replay and when is its own hard problem.

And the gap between robotic manipulation and language-based agent tasks is real. The representational structure of actions in physical space may behave differently from the representational structure of knowledge in semantic space.

Still — even with those caveats — the core finding is robust and replicable: pretraining dramatically changes the forgetting dynamics of continual learning. That's a result worth building on.

Takeaway for Agent Builders

If you're building agents that need to learn over time, the headline finding from this paper translates to a practical recommendation: start with the biggest, best pretrained foundation you can access, then use simple experience replay.

Don't overbuild. Don't implement EWC or complex progressive architectures unless you have a specific reason. The pretraining does the heavy lifting. Your job is to not mess it up — keep a small, diverse replay buffer, and let the model do what pretrained models apparently do naturally: remember.

The researchers from UT Austin, KAIST, and Microsoft have given the agent community a genuinely useful result here. Not a flashy benchmark breakthrough, but something more practically valuable: a clear, principled reason to be optimistic about lifelong learning agents. The forgetting problem, it turns out, might be mostly a pretraining problem. And we're solving that one fast.

Full paper: arXiv:2603.03818 — "Pretrained Vision-Language-Action Models are Surprisingly Resistant to Forgetting in Continual Learning" by Huihan Liu, Changyeon Kim, Bo Liu, Minghuan Liu, Yuke Zhu.

Authors' affiliations: UT Austin, KAIST, Microsoft Superintelligence.